This post by Ezra Klein discusses this study, entitled “Motivated Numeracy and Enlightened Self-Government,” by Dan M. Kahan, Erica Cantrell Dawson, Ellen Peters, and Paul Slovic. The gist of the post and the study is that people are less mathematically sophisticated when considering statistical evidence regarding a political issue.

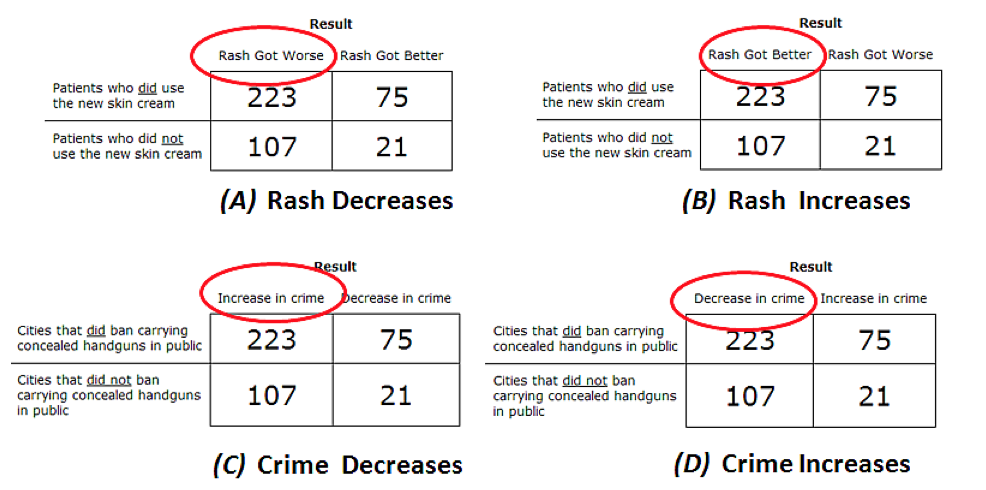

The study presented people with “data” from a (fake) experiment about the effect of a hand cream on rashes. There were two treatment groups: one group used the cream and the other did not. The group that used the skin cream had more subjects reported (i.e., a higher response rate), but a lower success rate.[1] Mathematically/scientifically sophisticated individuals should realize that the key statistics are the ratios of successes to failures within each treatment, not the absolute number of successes.
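
The rate-versus-count distinction can be made concrete with a small sketch. The counts below are made-up illustrative numbers (an assumption, not the figures shown to the study's subjects), and `improvement_rate` is a hypothetical helper name.

```python
# Illustrative 2x2 table: group -> (rash improved, rash worsened).
# These counts are invented for the example, not taken from the study.
table = {
    "cream": (200, 80),
    "no_cream": (100, 20),
}

def improvement_rate(improved: int, worsened: int) -> float:
    """Share of subjects in a group whose rash improved."""
    return improved / (improved + worsened)

rates = {group: improvement_rate(*counts) for group, counts in table.items()}

# Comparing raw successes and comparing rates point in opposite directions.
most_successes = max(table, key=lambda group: table[group][0])
highest_rate = max(rates, key=rates.get)

print("most raw successes:", most_successes)
print("highest improvement rate:", highest_rate)
```

With these numbers the cream group has more raw successes simply because it has more subjects, while the no-cream group has the higher improvement rate.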

This was the baseline comparison, as it considered a nonpolitical issue (whether to use the skin cream). The researchers then conducted the same study with a change in labeling. Rather than reporting on the effectiveness of skin cream, the same results were labeled as reporting the effectiveness of gun-control laws. All four treatments of the study are pictured below.

I want to make one methodological point about this study: the gun control treatments were not apples-to-apples comparisons with the skin cream treatment and, furthermore, the difference between them is an important distinction between well-done science and the messy realities of real-world (political/economic) policy evaluation/comparison.

Quoting from page 10 of the study,

> Subjects were instructed that a “city government was trying to decide whether to pass a law banning private citizens from carrying concealed handguns in public.” Government officials, subjects were told, were “unsure whether the law will be more likely to decrease crime by reducing the number of people carrying weapons or increase crime by making it harder for law-abiding citizens to defend themselves from violent criminals.” **To address this question, researchers had divided cities into two groups: one consisting of cities that had recently enacted bans on concealed weapons and another that had no such bans.** They then observed the number of cities that experienced “decreases in crime” and those that experienced “increases in crime” in the next year. Supplied that information once more in a 2×2 contingency table, subjects were instructed to indicate whether “cities that enacted a ban on carrying concealed handguns were more likely to have a decrease in crime” or instead “more likely to have an increase in crime than cities without bans.”

The sentence highlighted in bold (by me) is the core of my main point here. It was not even suggested to the subjects that the data was experimental. Rather, the description is that the data is observational. In other words, it wasn’t the case in the hypothetical example that cities were randomly selected to implement gun-control laws.

While this might seem like a small point, it is a big deal. To be direct about it: gun-control laws are adopted because they are perceived to be possibly effective in reducing gun crime,[2] and they are controversial,[3] so they will be more likely to be adopted in cities where gun crime is perceived to be bad and/or getting worse.

Without randomization, one needs to control for the cities’ situations to gain some leverage on what the true counterfactual in each case would have been. That is, what would have happened in each city that passed a gun-control law if it had not passed one, and vice versa?

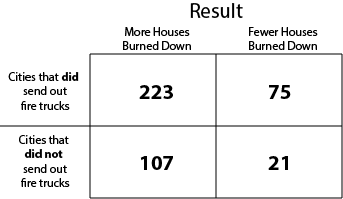

To make this point even more clearly, consider the following hypothetical. Suppose that instead of gun-control laws and crime prevention, we compared the observed use of fire trucks in a city and then evaluated how many houses ultimately burned down. Such a treatment is displayed below.

From this hypothetical, the logic of the study implies that a sophisticated subject is one who says “sending out fire trucks causes more houses to burn down.” Of course, a basic understanding of fires and fire trucks strongly suggests that such a conclusion is absolutely ridiculous.
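
A minimal simulation sketch of the fire truck hypothetical shows how observational data can produce exactly this ridiculous conclusion. Every number in it is an assumption chosen for illustration: half of all fires are severe, trucks are sent to 90% of severe fires and 10% of mild ones, and a truck halves the chance that the house burns down. The names `simulate_fires` and `burn_rate` are hypothetical.

```python
import random

def simulate_fires(n_fires=100_000, seed=0):
    """Simulate fires where trucks are sent mostly to severe fires.

    Returns a dict mapping (severe, truck_sent) -> [houses burned, fires].
    All probabilities are illustrative assumptions.
    """
    rng = random.Random(seed)
    cells = {(s, t): [0, 0] for s in (True, False) for t in (True, False)}
    for _ in range(n_fires):
        severe = rng.random() < 0.5
        # Non-random assignment: trucks go where the fire looks bad.
        truck_sent = rng.random() < (0.9 if severe else 0.1)
        p_burn = 0.8 if severe else 0.1
        if truck_sent:
            p_burn *= 0.5  # by construction, trucks always help
        burned = rng.random() < p_burn
        cells[(severe, truck_sent)][0] += burned
        cells[(severe, truck_sent)][1] += 1
    return cells

def burn_rate(cells, truck_sent, severe=None):
    """Burn rate for fires with/without a truck, optionally within one severity."""
    keys = [k for k in cells
            if k[1] == truck_sent and (severe is None or k[0] == severe)]
    burned = sum(cells[k][0] for k in keys)
    total = sum(cells[k][1] for k in keys)
    return burned / total

cells = simulate_fires()
print("pooled, truck sent:   ", round(burn_rate(cells, True), 3))
print("pooled, no truck:     ", round(burn_rate(cells, False), 3))
for severe in (True, False):
    label = "severe" if severe else "mild"
    print(label, "truck:", round(burn_rate(cells, True, severe), 3),
          "no truck:", round(burn_rate(cells, False, severe), 3))
```

Under these assumptions the pooled burn rate is about 0.365 in expectation when a truck is sent and about 0.17 when it is not, even though trucks halve the risk for every fire. Comparing within severity levels, a crude way of controlling for the situation, recovers the right sign.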

What’s the point? After all, the study shows that partisan subjects were more likely to say that the treatment their partisanship would tend to support (gun-control for Democrats, no gun-control for Republicans) was the more effective. This is where the importance of counterfactuals comes in. Let’s reasonably presume for simplicity that “Republicans don’t support gun-control” because they believe it is insufficiently effective at crime prevention to warrant the intrusion on personal liberties and that “Democrats support gun-control” because they believe conversely that it is sufficiently effective.[4] Then, these individuals, given that the hypothetical data was not collected experimentally, could arguably look at the hypothetical data in the following ways:

- A Republican, when presented with hypothetical evidence of gun-control laws being effective, could argue that, because towns adopt gun-control laws during a crime wave, regression to the mean might lead the evidence to overestimate the effectiveness of gun-control laws on crime reduction. That is, gun-control laws are ineffective and they are implemented as responses to transient bumps in crime.

- A Democrat, when presented with hypothetical evidence of gun-control laws being ineffective, might reason along the lines of the fire truck example: cities that adopted gun-control laws were/are experiencing increasing crime, and the proper comparison is not the increase in crime, but the increase in crime relative to the unobserved counterfactual. That is, cities that implement gun-control laws are less crime-ridden than they would have been if they had not implemented the measures, but the measures themselves cannot ensure a net reduction of crime during times in which other factors are driving crime rates.
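
The regression-to-the-mean argument in the first bullet can be sketched with a toy simulation. It assumes, purely for illustration, that each city's crime level in a given year is an independent standard normal shock, that a city adopts a ban whenever this year's shock exceeds a threshold, and that the ban has no effect at all. The function name `share_with_decrease` and all parameters are hypothetical.

```python
import random

def share_with_decrease(n_cities=50_000, threshold=1.0, seed=1):
    """Share of cities whose crime falls next year, by adoption status.

    Assumptions (illustrative only): crime shocks are independent draws
    from a standard normal, and the ban has zero causal effect.
    """
    rng = random.Random(seed)
    tallies = {True: [0, 0], False: [0, 0]}  # adopted -> [decreases, cities]
    for _ in range(n_cities):
        before = rng.gauss(0, 1)        # transient crime shock this year
        after = rng.gauss(0, 1)         # next year's shock, unaffected by the law
        adopted = before > threshold    # bans follow unusually bad years
        tallies[adopted][0] += after < before
        tallies[adopted][1] += 1
    return {a: dec / total for a, (dec, total) in tallies.items()}

shares = share_with_decrease()
print("decrease among adopters:    ", round(shares[True], 3))
print("decrease among non-adopters:", round(shares[False], 3))
```

In this sketch adopters see a decrease far more often than non-adopters even though the law does nothing, because cities adopt right after an unusually bad year. The second bullet's argument is the mirror image and follows the same logic as the fire truck example.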

Conclusion. The mathofpolitics points of this post are two. First, it is completely reasonable that partisans have more well-developed (“tighter”) priors about the effectiveness/desirability of various political policy choices. When we think about adoption of policies in the real world, it is also reasonable that these beliefs will drive the observed adoption of policies. Finally, for almost every policy of any importance it is the case that the proper choice depends on the “facts on the ground.” Different times, places, circumstances, and people typically call for different choices. To forget this will lead one to naively conclude that chemotherapy causes people to die from cancer.

Second, it’s really time to stop picking on voters. Politics does not make you “dumb.” People have limited time, use shortcuts, take cues from elites, etc., in every walk of life. Traffic-drawing headlines and pithy summaries like “How politics makes us stupid” are elitist and ironically anti-intellectual. The Kahan, Dawson, Peters, and Slovic study is really cool in a lot of ways. My methodological criticism is in a sense a virtue: it highlights the unique way in which science must be conducted in real-world political and economic settings. Some policy changes cannot be implemented experimentally for normative, ethical, and/or practical reasons, but it is nonetheless important to attempt to gauge their effectiveness in various ways. Thinking about this and, more broadly, how such evidence is and should be interpreted by voters is arguably one of the central purposes of political science.

With that, I leave you with this.

Note: I neglected to mention this study, “Partisan Bias in Factual Beliefs about Politics” (by John G. Bullock, Alan S. Gerber, Seth J. Hill, and Gregory A. Huber), which shows that some of the “partisan bias” can be removed by offering subjects tiny monetary rewards for being correct. Thanks to Keith Schnakenberg for reminding me of this study.

____________

[1] The study manipulated whether the cream was effective or not, but I’ll frame my discussion with respect to the manipulation in which the cream was not effective.

[2] Note that this is not saying that all “cities” perceive that gun-control laws are effective at reducing gun crime. Just that only those cities in which they are perceived to possibly be effective will adopt them.

[3] Again, in cities where such a law is not controversial, one might infer something about the level of crime (and/or gun ownership) in that city.

[4] I am also leaving aside the possibility that Republicans like crime or that Democrats just don’t like guns.
