I am a game theorist. I love thinking about situations as strategic interactions.

As a game theorist, I make assumptions all the time. (And I assume you do, too, in whatever you do. THAT’S META.)

In political science, there are, broadly defined and in my estimation, four categories of theory. Game theory (which includes mechanism design) is one, of course. The oldest of the four is typically simply called “political theory” or, more precisely, normative political theory within the discipline, and (while it is a decidedly and wonderfully big tent) it can essentially be described as work focused on theoretical concepts such as justice, fairness, & democracy, and how they should be measured. (That “normative” political theory is about measurement is my take; the usual framing of the research is “this concept implies that one should do the following.” If you think about it for a second, the implication of accepting such a conclusion is “adherence to this concept implies that you will do the following,” by which you can measure (from behavior, for example) how much someone values, or at least adheres to, this concept.)

The third category is known as social choice theory. This category includes studies of what is possible to achieve. There are a lot of ways this inquiry can be carried out. Examples include “can we always find a fair choice,” “is it possible to design a voting system that always rewards honesty,” or “can we combine people’s preferences to make a sensible public decision?” This field has been declared dead by more than a few social scientists, ironically due to a handful of noteworthy (and Nobel prize-winning) achievements, including Arrow’s (im)possibility theorem. That it is not, in fact, dead is proven by much current research, including the forthcoming book authored by Maggie Penn and myself, Social Choice and Legitimacy: The Possibilities of Impossibility.
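To make the “can we combine people’s preferences” question concrete, here is a minimal Python sketch of the classic Condorcet cycle. The three-voter profile and the function names (`majority_prefers`, `condorcet_winner`) are my own illustrative assumptions, not anything drawn from a particular paper or library.

```python
# Hypothetical three-voter profile; each list ranks options from best to worst.
PROFILE = [
    ["A", "B", "C"],
    ["B", "C", "A"],
    ["C", "A", "B"],
]


def majority_prefers(profile, x, y):
    """Return True if a strict majority of voters rank x above y."""
    votes_for_x = sum(
        1 for ranking in profile if ranking.index(x) < ranking.index(y)
    )
    return votes_for_x > len(profile) / 2


def condorcet_winner(profile):
    """Return the option that beats every other option head-to-head, or None."""
    options = profile[0]
    for x in options:
        if all(majority_prefers(profile, x, y) for y in options if y != x):
            return x
    return None


if __name__ == "__main__":
    # A beats B, B beats C, and C beats A, so no option beats all the others.
    print(condorcet_winner(PROFILE))
```

With this profile, majority rule cycles, so the function finds no winner. With a unanimous profile, the top-ranked option wins, which is the sense in which restricting “who may show up” changes what is achievable.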

Finally, a fourth category has emerged over the past forty years or so (beginning with the works of Schelling, Axelrod, and others). This area, computational modeling, develops and analyzes mathematical models of behavior and institutions to derive empirical predictions about political phenomena.

While open hostilities are luckily rare, the four categories do not “get along,” generally. This is both silly and a shame. It’s a shame because the four areas have a lot of opportunities for profitable and thought-provoking collaboration. It’s silly because they have a lot in common (independent of their extant potential synergies). In particular, “all four are theory.” That is, the best work in each of the four values and embodies clarity and, to the degree available, parsimony.

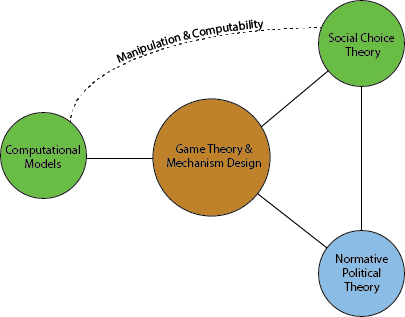

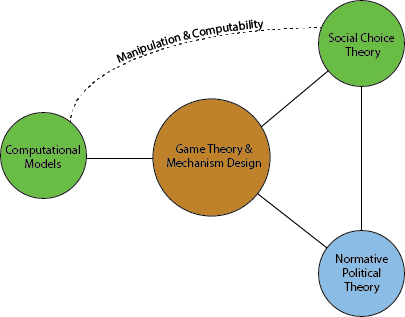

In the interest of parsimony I have drawn a picture of (at least some of) their connections. I have put game theory/mechanism design at the middle of the graph because, even if that’s not right, it instantiates the old adage that “the blogger draws the maps.”

This is a picture I drew…OF THE WORLD OF THE MIND.

Very quickly, why the distinctions between the four categories? Well, I have been thinking about them—and I am comfortable describing myself as “actively” working in all four areas—and I thought it might be useful (if only for me) to work through a quick description of the lines in the picture.

Normative political theory is, by and large, completely concerned with internal consistency.[1] In this sense, it is linked with game theory and mechanism design and social choice theory: all three categories highly value what is known as a “fixed point,” which describes a solution to a problem that, when put forward as the solution to the problem as described by the theory, does not then lead to a different solution being produced.
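For the game-theoretic version of a “fixed point,” here is a small Python sketch: a pair of actions is an equilibrium when each player’s best response to it is that same pair. The payoff numbers (a standard prisoner’s dilemma) and the names `PAYOFFS`, `best_response`, and `is_fixed_point` are assumptions chosen purely for illustration.

```python
from itertools import product

# Hypothetical payoffs for a two-player prisoner's dilemma.
# PAYOFFS[(row_action, col_action)] = (row_payoff, col_payoff)
ACTIONS = ["cooperate", "defect"]
PAYOFFS = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}


def best_response(profile):
    """Map a profile to each player's best reply to the other's action."""
    row, col = profile
    best_row = max(ACTIONS, key=lambda a: PAYOFFS[(a, col)][0])
    best_col = max(ACTIONS, key=lambda a: PAYOFFS[(row, a)][1])
    return (best_row, best_col)


def is_fixed_point(profile):
    """A profile is a fixed point if proposing it does not produce a new one."""
    return best_response(profile) == profile


if __name__ == "__main__":
    equilibria = [p for p in product(ACTIONS, ACTIONS) if is_fixed_point(p)]
    print(equilibria)
```

In this sketch, only mutual defection survives: put forward any other profile as “the solution” and at least one player wants to do something else.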

Social choice theory is concerned with generality and, relatedly, agnosticism. (There are important exceptions to this, such as in this article by Maggie Penn, Sean Gailmard, and myself.) That is, much of social choice theory tackles the tough questions of what can be achieved/guaranteed with as few restrictions on “who may show up” or “what people might want/believe” as possible. The link between social choice theory and normative political theory is hopefully clear: much normative political theory either in essence argues for what one should want or believe or works out how one should act given what one wants or believes, while most social choice theory finds what can happen when we can’t or don’t want to restrict what people want or believe.[2]

Normative theory and social choice theory are each less concerned with the details of the specific situation: they are more general than game theory.[3]

On the other end of the generality spectrum are (the vast majority of) computational models. Most computational models require specification of the parameters of a particular situation to generate (often very precise) predictions. If one has this specification at hand, (the right) computational models offer a direct path to the desired outcome: a prediction and the ability to start tinkering.

Part of the power of computational modeling is its deliberate setting aside of what I call epistemological concerns. Put another way, a good computational model is not required to be internally consistent with respect to individual or group motivations. That is, computational models do not necessarily yield what a game theorist would call “equilibrium outcomes.” (This is often properly trumpeted as a strength of computational modeling but, in my opinion, for the wrong reasons.) In other words, there is no reason to suspect that a computational model of a given political situation would remain accurate once the model was shown to one or more reflective agents involved in that situation.[4]

I’ll conclude now by restating my point bluntly: each of these is political theory. And, yes, game theory is at the middle: it requires specification of more details than social choice and normative theory (which I would argue, if pressed, truly differ only in their languages) and it requires “more of itself” in terms of internal consistency than is (appropriately) the norm in computational modeling.

Like I’ve said before about other analogous “comparisons,” asking which of these is the best is like asking whether a screwdriver is better than a hammer. It depends on whether you want to screw someone or simply hit them over the head.

With that, I leave you with this.

____________

[1] There are lots of great normative arguments that encounter the dirty feet of reality, but that is to the field’s credit, as opposed to a defining quality, in my opinion.

[2] Note that this illustrates an important category of empirical implications of social choice theory: social choice results inform one about the degree to which one can infer anything about the preferences and/or beliefs of a group of individuals from the decisions and choices one observes the group make.

[3] Mechanism design is “in the middle,” as Austen-Smith and Banks make clear in their incredible treatises (volume 1 and volume 2). That is, mechanism design asks, in essence, what general goals/outcomes can a group achieve through the proper design of a specific institution (i.e., “game”).

[4] I won’t discuss it here, but the dotted line in the figure connecting social choice and computational models reflects a very active area of research that asks when, how, and whether such reflective individuals might be able to change their behavior to make themselves better off.
