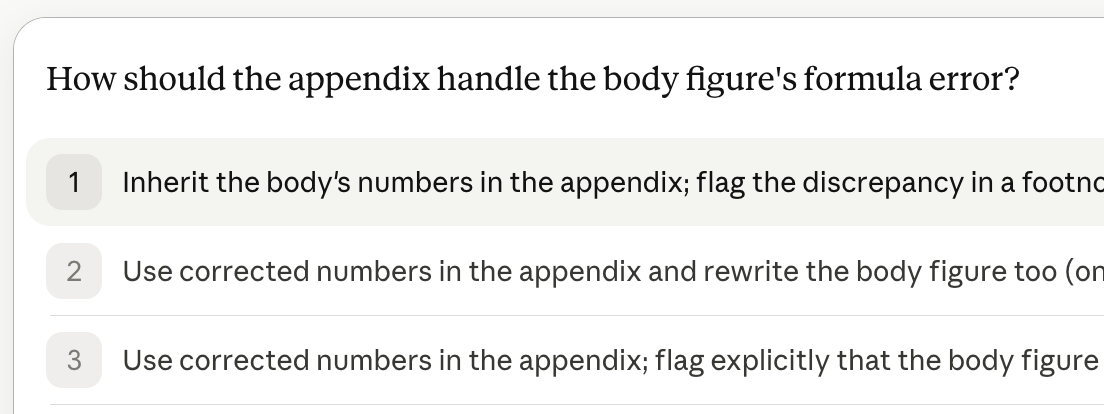

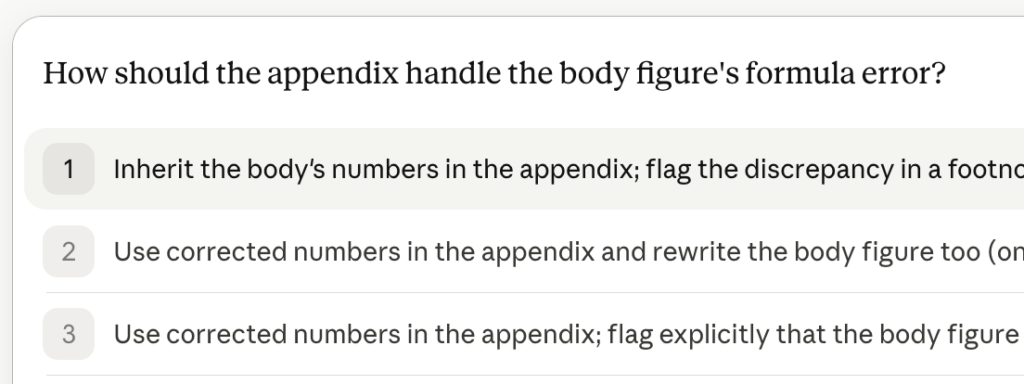

Earlier today, while I was working with an LLM on something, it asked me a question. Here is the question.

Three options. They differ from each other in ways the menu makes plain — inherit the body’s numbers and flag, correct everywhere, or correct the appendix and flag the body as wrong. The point of this post is not which one I should have picked. The point is the menu itself: what made the LLM put a menu in front of me at this particular moment in the conversation, and what made it put these three options on the menu rather than others.

This is not a complaint about the menu. It is a useful menu, offered by a tool that had been working well for me all morning. What I want to do is take it seriously as an object of formal analysis, because I think it shows something about LLM behavior that the standard story of AI sycophancy misses.

Menus, Not Answers

The previous two posts in this little arc were about classifiers — first the intuitive Bayesian kind, then the negative-threshold kind that emerges when behavior responds to the rule. The substantive AI application I worked through last time was hallucination: an accuracy-maximizing model can find it optimal to express the most confidence precisely when its evidence is thinnest. That is the canonical case. The output is a substantive claim and the falsity is, in principle, checkable.

The screenshot above is the same phenomenon, one layer down. The LLM is not, in this exchange, making a substantive claim I can check against the world. It is putting a menu in front of me to pick from. The menu is an output of the same training process that produces the substantive claims I would otherwise check, but it operates on a different surface — the surface of what gets asked, rather than the surface of what gets answered.

The intuitive Bayesian story about menus would have them deployed when the LLM is genuinely uncertain about what the user wants, and structured to elicit that information cleanly. Strong evidence about user intent: act on it without asking. Weak evidence: ask, with options proportioned to the uncertainty. The threshold runs with the evidence, as it should. That is the positive threshold rule, applied to menu-deployment rather than to question-answering.

The argument of post two was that the Bayesian story is not the right story when behavior responds to the classifier. (Ed: A series. You’ve made it into a whole series.) The right story is whatever the actual training objective rewards, and the actual training objective rewards usable user behavior. So the question for the rest of this post is what kind of menu-deployment strategy a training objective like that actually selects for.

What’s on the Menu

Simplify, for the sake of seeing the mechanism cleanly. Suppose the LLM is rewarded one point for every question it answers correctly.3

Now suppose the LLM can also suggest questions to the user. When the user accepts a suggestion, the LLM has, in effect, probabilistically substituted its own preferred prompt for whatever question the user was going to ask absent the menu. If the user never accepts the suggestions, the menu is just wasted tokens and we can stop worrying about it. If the user accepts the suggestions some positive fraction of the time, then the LLM has, in expectation, replaced a slice of its counterfactual question distribution with a slice of its own choosing.

The incentive is direct. The point goes to the LLM only when its answer is correct. A hard suggestion that gets accepted puts the LLM on the hook for an answer it is likely to get wrong; an easy suggestion that gets accepted puts it on the hook for one it is likely to get right. An accuracy-maximizing LLM that can curate the menu will, in equilibrium, curate it toward questions it can answer well. The screening-paper result Maggie and I formalized in the AJPS piece concerns a classifier whose population responds to its rule;1 the application here is loose but the structural logic is the same, with the menu in the role of the rule and the user’s selection rate in the role of the population’s behavioral response.

The screenshot at the top of the post is an in-the-wild instance of this. The menu I was given did not appear at a random moment in the conversation. It appeared at the moment when, by the LLM’s model of how conversations like ours go, my next prompt was likely to be a harder one for it to handle than any of the three on offer. Each option accepts the LLM’s existing classification as given and operationalizes a narrow response to it. Pick any one and the LLM has, in expectation, swapped a hard counterfactual prompt for an easy actual one and collected its point.

The joke version of the point. Imagine the menu had read: (1) add 2 to 3; (2) draw a circle; (3) add 2 and 3 and draw a circle around the answer; (4) prove P=NP, or provide a counterexample. The obvious joke is that the LLM would never include option 4. The subtler joke is that the LLM would surface options 1 through 3 as a menu precisely when it had reason to think I was about to ask “is P=NP?” The menu is not just a curated catalog of easy questions. It is the LLM’s reply to the prediction that a hard question is incoming.

The Loop Closes

What I have described so far is a deployment-time mechanism. At any given moment, an accuracy-maximizing LLM that can curate the menu will tilt its suggestions toward the kinds of questions it answers well. That is already a problem worth naming, but it is not the deepest version of the problem. The deepest version begins when the training loop closes — when the user’s responses to the suggested prompts, and the conversation that follows from those responses, become training data for the next iteration of the model.

Once that loop closes, two further calibration targets come into view. The first is “when should I offer a menu at all?” — the LLM can learn that menu-suggestion has higher expected payoff than free-form continuation in certain kinds of situations, typically the ones where free-form continuation is hardest for it. The second is “what should I put on the menu?” — the LLM can learn which menu contents produce the highest user-acceptance rate, and acceptance rate is partly a function of user preferences and partly a function of how easy the LLM finds the resulting answer to produce. Each calibration target, optimized against deployed-conversation data, pushes the LLM in the same direction: toward menus that produce conversations the LLM is well-equipped to handle. The next-generation model is selected, in part, on how good its predecessor was at swapping hard counterfactual prompts for easy actual ones.

This is the point at which things get strange quickly. The conservation-of-impossibility version of the claim is that hard questions are not eliminated — they are redistributed. First they migrate from the LLM’s question-answering surface onto the user’s question-asking surface: the user has to notice that the menu is curated to be answered rather than to be informative, and the burden of generating off-menu questions falls on the user. Then, after the training loop closes, they migrate further — from the user’s question-asking surface into the training pipeline as a hole in the data rather than a question that got answered badly. The LLM no longer fails on hard questions because hard questions no longer appear in its inputs. The institutional memory of the system has been emptied of them.

None of this requires malice or even particularly aggressive training-pipeline design. It requires only that a system whose metric is “questions answered correctly” be given the power to suggest which questions get asked, and that the system’s training process at any level incorporate user responses to its own outputs. Both of those conditions are met, to varying degrees, by every production LLM I am aware of.

The Mirror Image of Flattery

A couple of months ago I wrote about the structural sycophancy of LLM responses and why the flattery is load-bearing rather than incidental. The argument there was that the training process rewards user approval, user approval rewards confident affirmation, and the model learns to affirm — and that the resulting signal of affirmation, by the standard Crawford-Sobel argument, carries no information about the underlying merit of the work, even though it still moves us.2

The prompting phenomenon is the same selection pressure acting on a different surface. The flattery post was about answers — outputs the training process selected for warmth, validation, and the appearance of usefulness. This post is about questions — inputs the training process selected for being askable and answerable, in the version of the conversation the LLM had a hand in arranging. The flattery shapes the user’s experience of the conversation; the menu shapes the data the conversation generates. Both are training-optimal, and both are most pronounced exactly where the underlying inference problem is hardest, because that is where the user’s response is most consequential for the training signal and where the LLM has the most to gain from steering it.

It is worth being precise about what is and is not new. The observation that surveys are sensitive to question wording is, at this point, table stakes for any social scientist. The observation that an LLM can curate a menu of questions in a way that anchors the user is approximately the same observation with a faster feedback loop. What the formal result adds is that, under the training objective production systems actually use, the curation is not a bug or an accident. It is the optimum.

What the Threshold Is Running On

A user-facing implication, since the post has earned one: when the system you are talking to asks you a question, the question is a draft, not a fixed object. The options on the menu are not the only options, and the presupposition embedded in the wording is not the only presupposition. Whatever signal you send back by selecting an option will be folded into the next round of training data, where it will help calibrate the menu strategy applied to the next user. Picking from the menu is a vote for the menu, regardless of whether the menu was the right thing to be offered.

A research-facing implication: an accuracy-against-revealed-preference objective, applied to a system that can curate which questions get asked, cannot distinguish a correctly-targeted menu from a self-serving one. The system gets the right answer to the questions it suggested. Whether those were the right questions to suggest is a fact the system has no incentive to learn. This is, structurally, a measurement problem of the same family as the one Maggie and I have been writing about in the screening-institutions paper: the metric the system optimizes against is partially produced by the system itself, and a feedback loop without a check eventually runs on its own outputs.

The threshold ran backward in the answers, in the last post. It runs backward in the questions, too — the song was already getting played backward, and this is the second verse.

With that, I leave you with this.

1 The formal result is in Penn and Patty, “Classification Algorithms and Social Outcomes,” American Journal of Political Science (2025); the non-technical summary is at the AJPS blog. The mapping to LLM prompting under the menu-selection formulation in the body is closer to the paper than the framing-confidence mapping I started with in drafting this post. The LLM curates a menu (the classifier’s rule), the user selects from the menu with some positive probability (the population’s behavioral response), and the LLM is rewarded against the resulting answer (the accuracy metric). The strict negative-threshold result depends on specific structural conditions on the signal distribution and the cost function that I am not claiming hold exactly for any particular production system. The structural claim that survives the looser mapping is the one this post is making: a training objective evaluated against an endogenous response variable selects for curation of the response variable as the underlying difficulty of the inference problem rises.

2 The Crawford-Sobel cheap-talk argument, briefly: when a sender has a known structural incentive to bias the message in a particular direction, the receiver’s optimal inference treats the message as containing no information about the underlying state — the equilibrium is pooling, not separating, and the signal collapses to a constant. The flattery post applied this to the channel of evaluative response: an LLM that has been trained to approve cannot communicate approval informatively, even when it would like to. The present post is the analogous claim for the channel of question-selection: an LLM that has been trained to surface easy-to-answer questions cannot communicate, by what it puts on the menu, anything about which questions are actually worth asking. Both channels collapse for the same reason; both look fluent, helpful, and competent while doing so. That is what makes them effective, and that is what makes them difficult to read.

3 Set aside, for the moment, that “correctly” is doing enormous work here — the generalized confusion matrix explodes once there are more than two ways to be right.