Hi again! The question I’m about to pose is one that, I’m reliably informed, clears rooms at cocktail parties.1 But I think it sits at the foundation of why institutions are so hard to reform — and why the people who try to reform them so often end up making things worse. That’s for next time, though. Today, I want to talk about games.

Taking Your Ball and Going Home

Here’s a scene everyone recognizes. Two kids are playing a game — basketball, say. One of them is losing. So he picks up the ball, says “this is stupid,” and goes home (note: he never says, “I forfeit the game,” maybe he was in a hurry?) Anyway, pragmatically at least, “uhh, game over.” Sounds like a lot of (mostly less fun) games I have played in life. (I won’t tell you which character I was playing, but I will admit/confess that I have played both “roles,” so to speak. I’m a “double threat,” I suppose. Is that a compliment to myself?)

Now: what just happened, strategically? Within the rules of basketball, there is no explicit provision for this exact situation. Instead, the “rules of basketball” understandably tell you “what happens” when you shoot (depending on whether it “goes through the hoop,” for example), when you foul, when the clock runs out. They do not tell you what happens when a player picks up the ball and leaves the court, never to return. This action is, formally speaking, outside the game. Your first instinct might be: “Well, obviously — he loses. He quit.” And that’s a perfectly reasonable/”practically accurate” interpretation. But notice that “he quit, and therefore he loses” is your (and, yes, most of society’s) inference, not the rules’ literal interpretation.

To make this less ethereal, suppose instead the kid says, “I’m so sorry — my parents are here, I have to leave!” Should that kid lose because of his parents’ timing/schedules? (And, in spite of my inclinations, no, “don’t be a stickler right now.” Yes, that’s about to get “ironic AF”.)

The rules of basketball define how you score and how the clock works; they don’t contain a general provision for “a player decided to leave and never come back.” You’re filling the gap with common sense — and common sense, as we’ll see, is doing a lot of heavy lifting that the formal rules cannot. Let me push on this with a darker example, because I think it reveals something important.

The Penalty Ceiling

Suppose, in the course of an NBA game, you want to prevent an opponent from scoring. You could commit a blocking foul. You could commit a hard foul — a flagrant foul, in the NBA’s terminology.2 The NBA distinguishes two levels: a Flagrant 1 (“unnecessary contact”) gets you two free throws and possession for the other team, while a Flagrant 2 (“unnecessary and excessive contact”) adds an ejection. That’s where the ladder ends. There is no Flagrant 3. So: what if, instead of committing a hard foul, you grab the opposing player and strangle him? Within the formal rules of basketball, the in-game consequence is… [flips through pages speedily….] well, it’s identical to a Flagrant 2 foul. Ejection. Two free throws. Possession. The rules literally cannot distinguish, in terms of game outcomes, between a very hard basketball play and attempted murder. Everything above the Flagrant 2 ceiling looks the same to the game. Criminal law handles the strangulation, of course — but that’s an external enforcement system, a different “game” entirely. Within the four corners of basketball’s rules, the marginal in-game cost of escalating from a hard flagrant to actual assault is zero.3

Now, you might (yes, quite reasonably) think: “Fine, but no one actually strangles an opponent during a basketball game. The criminal law deters that.” True. But the fact that you need to invoke an entirely separate system of rules (here: “the rules of the legal system”) to handle actions that are physically possible within the game is essentially precisely the point. From a logical perspective, the rules of the “game of basketball” themselves have a ceiling,4 and above that ceiling, deterrence vanishes.

This matters beyond basketball. Consider: why have police unions historically resisted making the penalty for assaulting an officer as severe as the penalty for killing one? It’s not squeamishness. It’s strategy. If assaulting a cop carries ten years and killing a cop carries life, then a suspect who has already committed the assault faces an enormous marginal cost for escalating further. The gradient protects the officer. But if both carry life? The marginal cost of escalation drops to zero. A suspect who has already crossed the assault threshold faces no additional deterrence against killing. The punishment structure only deters escalation when there’s room to escalate into.

The general principle: any finite penalty schedule creates a flat region at the top where marginal deterrence fails. And raising the ceiling doesn’t solve the problem — it just moves the flat region higher. You haven’t eliminated the zone where deterrence vanishes; you’ve simply changed where (i.e., “conditional on what action?”) the deterrence “has its bite.”

And there’s a second problem with “if you do X, you lose” — one that is, if anything, even more fundamental. Everything I’ve said so far implicitly assumes a two-player game. In a (zero-sum)5 two-player game, “you lose” means “your opponent wins,” and since you have exactly one opponent, this is unambiguously bad for you. The fix might fail for other reasons, but at least it’s a punishment. Add a third player and even this breaks down. “You lose” no longer determines who wins — it just removes you from contention. And the question of which remaining player benefits from your removal is now a strategic variable. If you prefer Player C to Player B, and your continued participation is helping B more than C, then losing is not a punishment — it’s a gift to your preferred outcome. “If you break this rule, you lose” becomes, in effect, “if you break this rule, you get to kingmake.”6 The penalty has been tranformed from a deterrent into a strategic instrument, and, having assigned a definite/predictable outcome to the violation in question, the rules have no way to prevent (or, somewhat ironically, deter) this type of behavior. They did exactly what they were supposed to do. The problem is that what they were supposed to do “isn’t enough” — or more appropriately, they are not incentive compatible within the game itself.

This is not that exotic, of course. In sports, it’s called tanking: a team deliberately loses late-season games to secure a more favorable draft pick or dodge a stronger playoff opponent. In elections, it’s strategic withdrawal: a candidate drops out not because they can’t win, but to determine who among the remaining candidates does. In legislatures, it’s the entire logic of strategic voting and logrolling.

Simple and universal point: whenever “a game” has three or more players, even the declarative “you lose” outcome is no longer necessarily the worst possible outcome. How you lose, and when you lose, and who benefits from your loss are all strategic variables that the rules have handed you.7 The penalty, intended to close the game, has opened it. (Readers of this blog will note the family resemblance to a certain famous theorem about what happens when you have three or more alternatives: it sort of rhymes with “Mia Farrow.” We’ll come back to this.) I want to convince you that this problem is not trivial at all. In fact, I think it’s a deep problem, one that connects to some of the most important results in mathematics, and political economy.

The Chessboard, Overturned

Consider chess. Chess is, compared to basketball in the driveway, a remarkably well-specified game. The rules define every legal move, every legal position, and every terminal outcome (checkmate, stalemate, draw by repetition, and so on). Chess even has a formal provision for one action that might seem “outside” the game: resignation. If you tip over your king, the game ends and your opponent wins. Clean, elegant, formally complete. But now imagine a player who, upon finding herself in a losing position, sweeps all the pieces off the board and onto the floor. What happened? Not a resignation — she didn’t tip her king. Not a checkmate. Not a draw. The rules of chess, so carefully specified, have nothing to say about this. And here’s what’s interesting: it’s not obvious what they should say. The most natural response — the one most people jump to — is: “Well, obviously she loses. Flipping the board is just resignation with theatrics. We can infer that she wanted to concede and was simply… efficient about it.” And in a single game of chess, maybe that resolution works well enough. But notice what it’s doing: it’s interpreting a physical action (scattering pieces) as a strategic action (resignation) by reasoning about the player’s intent. The rules of chess say nothing about intent. We’re filling the gap with inference — and inference, as we’re about to see, opens its own can of worms.

The Game Within the Game

Here’s where it gets interesting (Ed: …Finally?). Suppose our chess player isn’t playing a single game. She’s playing a best-of-seven match. She’s down a game, and the current game — game 3 — is going badly. She has two options within the formal rules: play on to the bitter end, or resign. But these two options are strategically different in the context of the match, even though they produce the same outcome in game 3 (she loses). Playing to the bitter end reveals information — about her style, her preparation, her responses to specific positions — that her opponent can exploit in games 4 through 7. Resigning early conceals that information. Accordingly, the timing and manner of her concession is itself a strategic variable, one that the rules of chess (which govern individual games) don’t acknowledge at all. The match is a game; each game within the match is a game; and the two levels interact in ways that neither level’s rules fully capture. Now: is it “legitimate” for a player to play badly — or concede early — in game 3 in order to improve her chances in game 4, 5, 6, and/or 7? While I play chess, I’m not serious at it (Ed: you mean you’re not that good at it?) That said, I suspect that most chess players would say this offends the spirit of competition (to understand why, ask yourself, “does anybody think being described as tanking something is a compliment?) But the rules of a best-of-seven match, as typically specified, say nothing about it. We’re back in the gap between what the rules formally cover and what is physically (and strategically) possible.

What Poker Understands

This is a good moment to note that at least one common game does understand the problem we’re circling around — or at least one important dimension of it. In standard Texas Hold’em, when all of your opponents fold, you win the pot. You may then show your cards to the table, but you are explicitly not required to. This is a rule about information, and it is one of the rare cases where a game’s designers grasped that the strategic management of private information is itself part of the game. Whether you show a bluff, show a strong hand, or show nothing at all is a decision with consequences for future hands — and the rules protect your right to make that decision. Most rule systems are not nearly this sophisticated. They either ignore the information dimension entirely (chess doesn’t care (or, more accurately, is realistic about the fact that it “can’t measure”what you were “thinking” about doing) or — and this is the case that will matter most for us — they try to compel disclosure, and immediately discover that compelled disclosure is extraordinarily hard to enforce.

Belichick’s Injury Reports (and Other Mendacities)

Which brings us to the NFL, and to a man who made a career out of finding the gaps between what rules say and what rules mean. The NFL requires teams to publicly disclose player injuries before each game. The purpose is transparent: betting markets, opposing teams, and fans should have access to the same basic information about who’s healthy and who isn’t. The rule was designed to “level the playing field” — to prevent teams from gaining a strategic advantage by concealing private information about their own roster. This is, on its face, a reasonable rule. It is also exactly the kind of rule that is most vulnerable to manipulation, because it attempts to regulate something — private information — that the regulator cannot directly observe. The NFL can see what a team reports. It cannot easily verify whether the report is accurate. And so Bill Belichick, with characteristic precision, listed half his roster as “questionable” every single week. Technically compliant. Informationally useless. The rule required disclosure; Belichick disclosed — in a way that conveyed nothing. The spirit of the rule was defeated by the letter of the rule, and the letter couldn’t be tightened without creating new problems. (What does “accurate” mean? Must a team disclose a player’s private medical details? Who adjudicates disagreements about severity?) Notice the irony: the injury disclosure rule was created specifically to prevent teams from “gaming the game” with private information. But the rule itself became the game that got gamed. This isn’t a bug in the NFL’s rule-writing process. I think it’s a theorem — and we’re about to see it again.

Belichick’s Safety

Let me give you a second Belichick example, because one might be an anecdote but two starts to look like a pattern (and, yes, I am both a proud Tarheel and Steelers fan, so I am not “unbiased” with respect to Billy B). In a 2003 NFL game, Belichick’s New England Patriots were leading the Denver Broncos late in the game. Facing a 4th down deep in their own territory, the conventional play would be to punt. But Belichick did something that, at the time, struck many observers as bizarre: he had his punter intentionally run out of the back of the end zone, conceding a safety — two points for Denver. Why? Because a safety, unlike a punt, is followed by a free kick from the 20-yard line, which typically travels farther and is harder to return than a punt from deep in your own end zone. Belichick wasn’t breaking any rules. He was following them. But he was exploiting a feature of the rule mapping — the relationship between safeties and free kicks — that the rules’ designers almost certainly never intended as a strategic option. The rules said: “if a safety occurs, the following happens.” They assigned an outcome to the event. And that assigned outcome, in the right circumstances, made deliberately causing the event profitable. This is not a curiosity. This is a theorem.

Gibbard-Satterthwaite, in Football Pads

The Gibbard-Satterthwaite theorem, one of the foundational results in social choice theory, tells us (informally) that any sufficiently rich system of rules that isn’t dictatorial — that is, any system where more than one person’s actions matter — is manipulable. There exists some situation in which some agent can achieve a better outcome by acting contrary to the system’s intended purpose. Both of Belichick’s exploits are Gibbard-Satterthwaite in football pads. The NFL’s rules are “sufficiently rich” (they cover a complex, multi-agent strategic environment) and non-dictatorial (both teams’ actions matter). So the theorem guarantees that there exist situations where a team can benefit by doing something the rules didn’t envision as a strategic choice. The intentional safety was always there, latent in the rule book, from the moment the safety/free kick provision was written. The meaningless injury report was always available, from the moment the disclosure rule was written. It just took decades — and a coach who modeled the game differently than the rule designers — to find them. And notice the computational point: these exploits were hard to find. Not hard in the sense of requiring genius (though Belichick is a genuinely brilliant strategic mind), but hard in the sense that the space of possible rule interactions is vast, and most people never think to search it. The manipulability is guaranteed by theorem; the discovery of any particular manipulation is a search problem of potentially enormous complexity.

The Trilemma

Now let’s go back to our ball-taker and our chessboard-flipper and think about what a game designer could do about these “outside” actions. I think there are exactly three options, and none of them is satisfactory.

Option 1: Leave the action outside the game. The rules simply don’t address it. This is the status quo for chessboard-flipping. The game is formally incomplete: there exist feasible actions with no assigned outcome. This might seem acceptable — we handle these situations with social norms, tournament rules, or just the general understanding that you’re not supposed to do that. But “not supposed to” is doing an enormous amount of work here, and it’s not part of the formal game. We’ll come back to this.

Option 2: Assign the action a bad outcome. “If you flip the board, you lose.” This is the most natural response, and it’s what most rule systems try to do — define penalties for rule-breaking. But here’s the problem: the moment you assign an outcome to an action, you’ve brought that action into the game. It’s now part of the strategy space. And once it’s part of the strategy space, it interacts with everything else. Belichick’s safety is exactly this: the rules assigned an outcome to the “bad” event of a safety, and that assigned outcome, in interaction with the rest of the rules, made the event strategically attractive. The injury report is a subtler version: the rules assigned a requirement (disclose) with a penalty (fines, draft picks) for noncompliance — and in doing so created a new strategic question (how to comply in form while defecting in substance) that didn’t exist before the rule did.

Worse, any newly incorporated action can be used as a threat. “Trade with me or I flip the board” is now a meaningful strategic statement, because “flip the board” has a formally defined consequence. You’ve just enriched the game in ways you may not have intended. And recall the multiplayer problem from earlier: even the seemingly nuclear option — “if you do this, you lose” — is only a deterrent when the game has exactly two players. The moment there are three or more, “you lose” becomes a strategic instrument rather than a punishment, because the violator gets to influence who among the remaining players benefits. This is not a minor caveat. Most real-world “games” — legislatures, markets, regulatory environments, organizations — have many players. In these settings, Option 2 doesn’t just fail because penalties create new strategic possibilities. It fails because the maximum penalty — total defeat — is itself a strategic resource. The penalty schedule cannot be made severe enough to deter a player who would rather kingmake than compete. There is, quite literally, no “bad enough” outcome to assign, because the badness of the outcome for the violator is not the relevant quantity — the relevant quantity is the differential effect of the violation on the remaining players, and the rules cannot control this without controlling the entire game, which is the problem we started with.

This, I think, is where the blog’s namesake result makes its quiet entrance (Ed: I just knew you were into “branding”). The two-player case is well-behaved: there’s one opponent, preferences are opposed, and penalties can work (modulo the ceiling problem). Add a third player — or a third alternative — and the structure changes qualitatively. Stability dissolves. Manipulation becomes ubiquitous. Three implies chaos.

Option 3: Define an external enforcement mechanism. “There’s a referee, and the referee handles situations the rules don’t cover.” This works — until you realize that the referee’s judgment is itself a rule system. What are the rules governing the referee? Can a player “go outside” the referee’s rules? If so, you need a meta-referee. And meta-meta-referee. You’ve begun an infinite regress — or, if you prefer, you’ve acknowledged that the game is embedded in a larger game, which is embedded in a larger game, and somewhere the buck has to stop at a system that is, itself, formally incomplete.

Why This Matters (or: Gödel Was Here)

If the “trilemma” above reminds you of something, it should (Ed: Oh goodness, is this another “truels post“?). Gödel’s incompleteness theorems tell us, roughly, that any formal system rich enough to express basic arithmetic cannot be both consistent and complete. There will always be true statements that the system cannot prove from within.

The analogy to games is, ahem, more than an analogy (is there a word for “X is analogous to X,” beyond “tautological” (Ed: Not that tautologies have ever stopped you before). A “self-enforcing” rule is one where breaking that rule is never incentive-compatible, given the other rules of the game. This is another way of understanding “internal consistency,” for those of you playing at home.

To verify that a rule is self-enforcing, you need to check it against all other rules and all possible strategies — which is itself a statement within the system. And for any sufficiently rich game, the system cannot verify all such statements from within. There will always be some actions, some contingencies, some interactions that the rules cannot “reach” without expanding the system — at which point you’ve created a new system with new gaps. A game, in other words, cannot fully know its own rules. It cannot certify, from within, that all of its rules are self-enforcing. There will always be a kid who can pick up his ball and go home, and the game — qua game — has nothing to say about it.

A more tangible way of understanding this: any interesting game must have some rule X that the other rules of the game that define “winning the game” must sometimes give you an incentive to break “rule X.”

I now dub that the Billy B Rule and it expands far beyond American Football, Chapel Hill, and indeed time and space itself! (Ed: Seriously? ….Oh, what the hell, if they’re still reading, let’s go for it, I guess.)

The Impossibility Migrates

I want to close (Ed: What? Oh, I thought you were just getting started.) by suggesting that what we’ve identified is not merely a curiosity about games. It’s a conservation law. The trilemma says that the “gap” in a rule system — the space between what the rules formally cover and what strategic agents can actually do — cannot be eliminated. It can only be relocated.

You can leave it as incompleteness (Option 1), and accept that some actions have no formal consequence.

You can try to close it by assigning penalties (Option 2), and discover that the gap reappears as manipulation — new strategic possibilities created by the very rules you wrote to prevent the old ones.

Or, you can hand it off to an external enforcer (Option 3), and watch the gap reappear one level up.

In any event, the problem is conserved; it just changes form. This pattern — call it the migration of impossibility — shows up far beyond sports and parlor games.

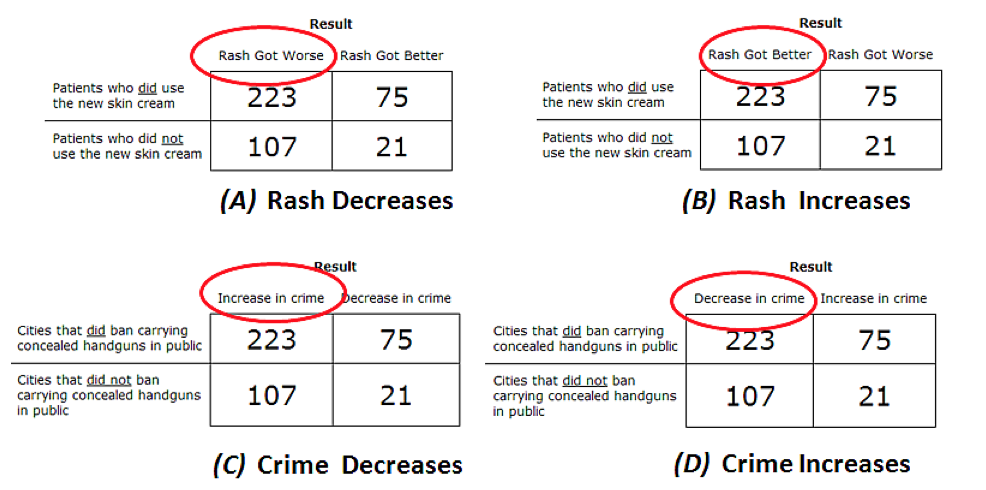

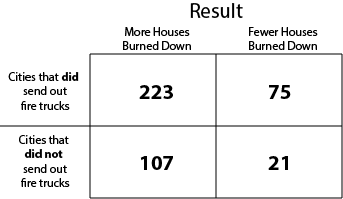

The “Hook”: Consider algorithmic fairness. There’s a well-known result (due to Kleinberg, Mullainathan, and Raghavan, and independently to Chouldechova) showing that two natural fairness criteria — error-rate balance and predictive parity — are generally incompatible when different groups have different base rates of the behavior the algorithm is trying to predict. This is, in its structure, an impossibility theorem of the same species as the ones we’ve been discussing: you can’t have everything you want, simultaneously, within the system.

Now, in some recent work that Maggie Penn and I have been doing, we noticed something. The classical impossibility results hold behavior fixed — they assume that people’s base rates of compliance (or recidivism, or default, or whatever the algorithm is classifying) are just facts about the world, not choices that respond to incentives.

But of course they are choices that respond to incentives, and in particular they respond to the stakes of classification — the severity of the fine, the length of the sentence, the terms of the loan. Once you recognize that base rates are endogenous — that they’re equilibrium objects shaped by the algorithm and its consequences — an escape route from the impossibility opens up. You can simultaneously achieve error-rate balance and predictive parity by adjusting the stakes of classification to induce equal base rates across groups.

Cool, …problem solved, right?

Not quite. Here comes the conservation law. The statistical impossibility disappears, but it migrates: achieving both fairness criteria requires that identical classification decisions carry different consequences for different groups. You’ve moved the inequality from the distribution of algorithmic outcomes to the severity of consequences attached to those outcomes. The impossibility doesn’t vanish. It changes address. And it gets worse — in a way that connects directly to the penalty-ceiling problem. In some cases, equalizing base rates under equal stakes requires penalizing compliance — effectively setting negative incentives that suppress the behavior the system is supposed to encourage.

That’s the fairness equivalent of flattening the penalty gradient between assault and murder. You’ve “equalized” the treatment, but you’ve destroyed the incentive structure that was generating the behavior you wanted. The gap migrates, again, from one form of unfairness to another.

I think this is a general feature of any system that tries to regulate strategic behavior. The gap between what the rules intend and what agents can do is not a deficiency of any particular set of rules. It is a structural property of the relationship between rules and the strategic agents who inhabit them. Fix it here, and it appears there. Close this loophole, and you open that one. The impossibility is conserved.

A Provocation for Next Time

So if the impossibility always migrates — if every fix to a rule system creates new gaps somewhere else — then what does this mean for the biggest, most complicated “games” we play? What does it mean for institutions, bureaucracies, governments? It means, I’ll argue, that every well-functioning institution is riddled with informal patches — norms, workarounds, conventions, and practices that exist precisely to handle the cases the formal rules can’t reach.

These patches are the institution’s solution to the migration problem: every time a gap was discovered, someone — a bureaucrat, a judge, a middle manager — found a way to cover it, and that patch became part of the operating system. The institution looks messy from the outside because it is messy. It has to be. The formal rules can’t do the job alone, and the patches are where the real work happens. And it means that anyone who looks at those patches and sees only waste, inefficiency, or evidence of a “deep state” is making a very specific error: they’re assuming the game is complete, when we just showed it can’t be.

They’re treating the messiness as a bug, when it is — often, not always, but far more often than reformers tend to appreciate — a feature. There’s also, I think, a deeper thread here about information — about the fact that rules governing who knows what, and who must disclose what to whom, are a particularly fragile species of rule. Poker understands this; the NFL tried and largely failed; and some of our most important legal infrastructure (think §6103) exists precisely at this fault line. But all of that is for next time. (Ed: Oh, you’ll be back…like in 2016? Sheesh.)

For Now, I Leave You with This

In the 1983 film WarGames, a military supercomputer called the WOPR is tasked with simulating global thermonuclear war. It plays every possible scenario — every first strike, every retaliation, every escalation — searching for one that ends in victory. It finds none. After cycling through the entire game tree, it arrives at a conclusion: “A strange game. The only winning move is not to play.” (Ed: I could make a joke about your blog, but I think you already see it, dammit.)

The WOPR, in other words, did what the trilemma says can’t be done: it verified, from within the game, that the game has no self-enforcing solution. It searched the space, hit every penalty ceiling, found every flat region at the top, discovered that every “winning” move triggers a retaliation that migrates the problem somewhere worse — and concluded that the game is, in our terms, formally incomplete.

There is no outcome the rules can assign to “global thermonuclear war” that makes initiating it incentive-incompatible (Ed: Thank goodness, …right?), because the penalty structure maxes out at “everybody dies,” and at that ceiling, the marginal cost of escalation is zero. Of course, the WOPR had an advantage we don’t: it could search the entire game tree. For the rest of us — playing games whose rules we can’t fully verify, in institutions whose patches we can’t fully see, against opponents whose strategies we can’t fully anticipate — the only honest starting point is to admit that the game is bigger than its rules. With that, I leave with one (dated, but memorable, and timeless) question: “Shall we play a game?”

- He didn’t inform me of this, but my friend and coauthor Tom Clark essentially encouraged me to write this up some months ago. ↩︎

- Note the “subtle shift” here: I moved from “basketball” to “basketball as governed by” (or, to quote James Scott’s awesome work: “made legible by” a specific institution that, ahem, “provides basketball to the public for their enjoyment and remuneration.” ↩︎

- And here’s an additional wrinkle: the NBA’s rules say that no team may be reduced below five players. If a player fouls out (six personal fouls), but there are no eligible substitutes, that player stays in the game and is charged with a personal foul, a team foul, and a technical foul for each subsequent infraction. So ejections are actually the only mechanism that can force a team below five — which means our strangler has, in addition to getting himself tossed, potentially inflicted a roster-count penalty on his own team. But note: this is the same roster-count penalty he’d have inflicted with a garden-variety Flagrant 2 for an overly aggressive screen. The punishment doesn’t scale with the severity of the act. (And even the “stay in the game with a technical” rule is itself manipulable. If your player just picked up his sixth foul with 30 seconds left in a close game, is the team better off keeping him on the court — where every subsequent foul triggers another technical free throw for the opponent — or just… letting him leave and playing 4-on-5? The rule was designed to protect teams from being shorthanded. But in the right circumstances, the “protection” costs more than the problem it solves. We’ll see this pattern again.) ↩︎

- Speaking of “ceilings,” I am tempted to ask what Naismith would have thought of physical “ceilings” in laying out the initial rules of basketball. Don’t know if he was a physicist or even that “sophisticatedly rational” to think about it, but I would suppose that he would have eventually agreed that “having a ceiling over the game” where you throw a ball up high to avoid defenders’ hands would “only complicate” the eventual performance (and adjudication) of his new game. This makes think of both XFL and Arena Football: both are fun, partly because they borrowed some of the elements of an “already legible sport” (i.e., American Football) and “slightly modified” the nature of the constraints in that sport…) ↩︎

- For simplicity, let’s just think about “games” where there can be no more than one winner. That a lot looser than “zero-sum”in a formal sense, but with two players, it’s basically without loss of interesting generality (and, yes, I am an American, and I do (in my heart) think “ties are boring.” But that’s maybe why, or because, I find faculty meetings generally unsatisfying. There’s a lot in there, I know.) ↩︎

- I think the idea that “kingmaking” is a recognized verb should make all of us think more about the nature of language in both analytical and sociological terms. ↩︎

- I say “the rules” have “handed you” this to differentiate it from very real, “expressive” feelings of guilt or failure from being labeled “a loser.” Just ask our president DJT. The only thing he hates more than rules is being (or, it seems, being associated with) “a loser.” ↩︎