A Vice President at a large technology company sits down at the end of the quarter to evaluate her engineering team. She has twelve direct reports. They have spent the quarter using AI tools heavily, as the company’s leadership has insisted they do. She must now write performance reviews. She has to say, in writing, which engineers used the AI well and which did not.

She does not know how to do this. The reason is the same reason she hired the engineers in the first place.

If she could tell which of her engineers’ judgment calls were the right ones, she would not have needed them. She would have made those calls herself. The whole structure of expertise-based hiring rests on the firm’s acknowledgment that it cannot do the work it is buying: there is a gap between what the firm needs done and what the firm’s leadership knows how to do, and that gap is precisely what the hires are meant to fill. The hire presupposes the inability. The evaluation, if it actually evaluates the wisdom of the work, presupposes the ability. The two presuppositions cannot both hold at once.

This is not a small inconsistency. It is a logical contradiction at the heart of how every firm with expert employees has to operate. The firm cannot live with the contradiction stated plainly — we hired people to do work we cannot evaluate — because that statement makes evaluation impossible, and evaluation is the spine of how firms allocate raises, promotions, and continued employment. So the firm reaches for proxies. Surely something about the work can be measured. Surely something on the dashboard can stand in for the wisdom the VP cannot directly assess.

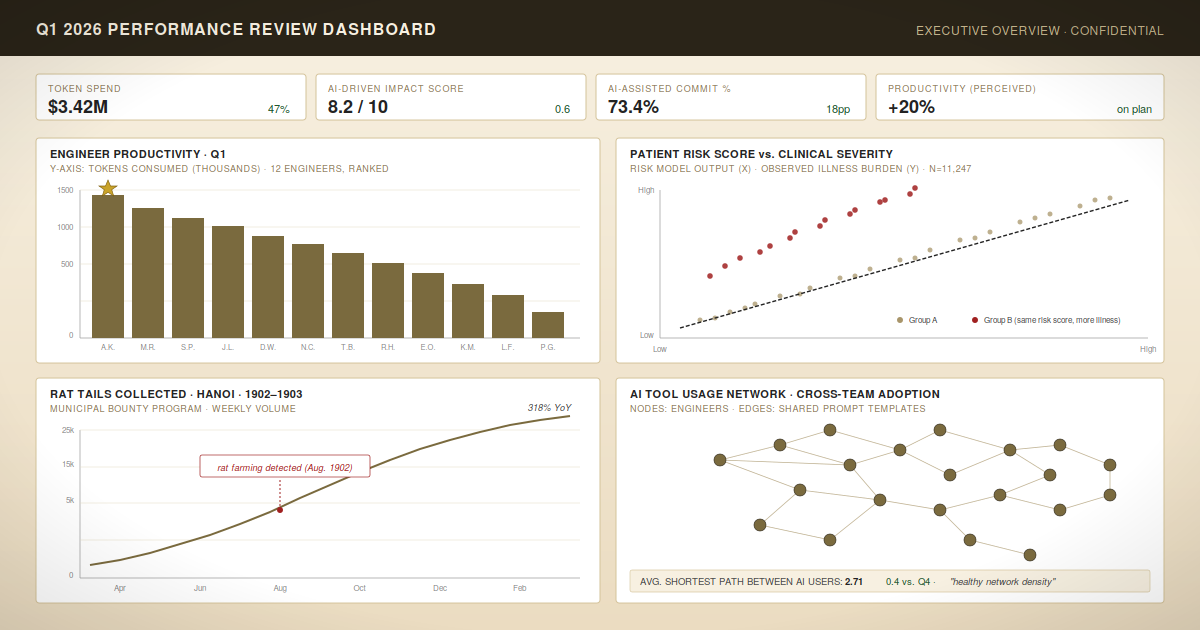

The company’s tooling helpfully provides token counts. AI-assisted commit percentages. Quarterly spend per engineer. At Meta, an internal system called Checkpoint aggregates more than 200 data points per engineer — lines of code generated with AI tools, error rates, bugs traced to AI-assisted commits, code written without AI assistance — and feeds the summary into the manager’s review preparation. The metrics arrive on the dashboard as if they were answers to the VP’s question. They are not. They are answers to a different question, which is how much AI did each engineer use? — a question that bears the same relationship to her actual question that prescription count bears to the question of whether a doctor is any good.

Imagine evaluating doctors by prescription count. The physicians at the top of the leaderboard would be the ones writing the most prescriptions. Some would be excellent diagnosticians with complicated patient panels. Others would be running pill mills. The metric cannot tell the difference, because the metric does not know what good doctoring is. It only knows what is countable about doctoring. And the reason the metric does not know what good doctoring is, of course, is the same reason the healthcare system hired the doctors in the first place — the system does not know what good doctoring is, in the case-by-case operational sense that matters, which is why it employs people who do.

This is not only a hypothetical. In 2019, a team led by Ziad Obermeyer published a study in Science¹ on a widely deployed hospital algorithm (one used by health systems making decisions about more than two hundred million patients in the United States) that was supposed to identify which patients would benefit most from extra care. The algorithm did not measure who needed extra care directly. It could not. So it measured something it could see: which patients had historically generated higher healthcare costs. The proxy was reasonable on its face. Sicker patients do, on average, cost more. But the proxy was not measuring sickness. It was measuring spending, and Black patients in the United States, with systematically less access to care, generate less spending than equally sick White patients. The algorithm did exactly what it was built to do. It just was not built to do what the hospitals thought it was built to do. The result was that Black patients with the same level of clinical illness as White patients were less than half as likely to be flagged for additional care. The algorithm was not biased in the sense of having race as an input. It was biased in the sense of having bought a proxy in place of the thing the hospital actually wanted to measure, which is, again, the same kind of thing that happens when a buyer cannot do, in-house, the work it is paying others to do.
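The mechanism is easy to reproduce in miniature. What follows is a toy sketch, not the algorithm from the study: the six patients, the `access` fraction, and the cost formula are all invented for illustration. It ranks the same patients twice, once by the visible proxy (cost) and once by the thing the hospital wanted (illness), and flags the top two each time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patient:
    name: str
    group: str      # hypothetical groups: "A" has full access to care, "B" has half
    illness: int    # true clinical need, which the hospital cannot observe directly
    access: float   # assumed fraction of needed care the patient actually receives

    @property
    def cost(self) -> float:
        # Assumption: spending scales with illness, discounted by access to care.
        return 1000 * self.illness * self.access


def flag_top(patients, key, k):
    """Return the names of the k patients ranked highest by `key`."""
    ranked = sorted(patients, key=key, reverse=True)
    return {p.name for p in ranked[:k]}


# Two groups with identical illness levels; only access to care differs.
patients = [
    Patient("a1", "A", 8, 1.0),
    Patient("a2", "A", 5, 1.0),
    Patient("a3", "A", 3, 1.0),
    Patient("b1", "B", 8, 0.5),
    Patient("b2", "B", 5, 0.5),
    Patient("b3", "B", 3, 0.5),
]

flagged_by_cost = flag_top(patients, key=lambda p: p.cost, k=2)
flagged_by_need = flag_top(patients, key=lambda p: p.illness, k=2)

print("flagged by cost:", sorted(flagged_by_cost))
print("flagged by need:", sorted(flagged_by_need))
```

Ranked by cost, both flagged patients come from group A, and the sickest patient in group B is passed over for a less sick patient who spends more. Ranked by need, the two sickest patients are flagged, one from each group. Group membership never appears in the ranking function.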

The pattern is also older than the technology industry by some distance. In 1902, the French colonial administration in Hanoi, faced with a rat infestation, offered a bounty per rat tail turned in. Citizens responded by farming rats. The bounty system was eventually canceled, after which the rat-farmers released their stock into the city, leaving Hanoi with substantially more rats than it had started with.² The episode is usually cited as a cautionary tale about perverse incentives, which it is, but the more fundamental story is the one that produced the bounty in the first place. The administration could not exterminate rats itself. So it bought rat-extermination from the population, by the tail. The metric was the form the purchase took, and the purchase contained the same contradiction the VP is now confronting: the buyer paying for work it cannot, by construction, evaluate, and therefore paying for the only thing it can count.

(Our engineers used 47% more tokens this quarter than last, the breathless internal memo will read. Productivity gains are accelerating. The future is incredible.)

It is worth pausing on what the actual evidence looks like here, because the evidence is interesting in a way the dashboards are not. A randomized controlled trial published last summer by METR found that experienced open-source developers, working on codebases they had contributed to for years, took 19% longer to complete tasks when allowed to use AI tools than when they were not. The same developers, asked afterward how much faster they had been, estimated they had been about 20% faster. The gap between perception and measurement was nearly forty points. The dashboards at firms like Meta would, in any given quarter, register the AI-assisted developers as having used the AI a great deal. They would not register that the developers had been slower. They cannot. The metric does not see what it was not built to see.
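The "nearly forty points" figure is simple arithmetic on the two numbers above. A minimal sketch, assuming that "20% faster" can be read as roughly a 20% reduction in task time (a simplification, and the variable names are illustrative):

```python
# Change in task completion time when AI tools were allowed, in percent.
measured_time_change = +19    # measured: tasks took 19% longer
perceived_time_change = -20   # self-reported: about 20% faster (assumed to mean -20% time)

# Distance between what the developers believed and what the trial measured.
perception_gap_points = measured_time_change - perceived_time_change

print(f"gap between perception and measurement: {perception_gap_points} points")
```

A usage dashboard records neither of these two numbers. It records only that the tools were used.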

I have written a series of posts on this blog about what I call the junk drawer — the category, in any well-functioning institution, of items that resist clean classification and that the institution honestly acknowledges as such. The argument across those posts has been that the institutions that maintain explicit junk drawers do better than the institutions that pretend everything resolves into legible subcategories. The pretender does not eliminate the uncategorizable; she just relocates it into categories that do not fit, and then makes decisions on the basis of categories that do not fit.

The contradiction the VP is facing puts a particular kind of item in the junk drawer. The drawer’s contents, in this case, are not just hard-to-classify items. They are items the firm bought, and the purchase is what created the drawer: the moment the firm decided to hire experts rather than develop the expertise internally, it accepted that some part of its operations would be evaluated only at one remove, by indirect signals and accumulated trust, never by direct inspection of the work. The drawer is not a regrettable feature of the firm’s measurement systems. The drawer is constitutive of what it means to have hired experts at all.

The honest VP acknowledges this. She admits that the question on her quarterly review form — did this engineer use the AI well? — does not have a metric-shaped answer, and that the reason it does not is the same reason the engineer is on the payroll in the first place. Her job is not to evaluate the wisdom directly. Her job is to maintain the conditions under which the wisdom can develop and be exercised, and to make her judgments about the engineer over horizons longer than a quarter, on the basis of whether the team builds things that work and that they understand. The dishonest VP fills out the form using the dashboard.

This is, as it happens, a much harder junk drawer to maintain than most. There are at least two reasons for that, both specific to the kind of tool the AI is and the kind of company that designs it. But those reasons want their own treatment, and they will get it. For now, the small point is that the next time someone tells you their team used 47% more tokens this quarter than last, you might consider asking what, exactly, that is supposed to tell you about anything — and whether the person telling you is in a position to know. Some of the firms that helped build the dashboards are beginning to ask the same question themselves.

(So many beans! Has any team, in the history of teams, ever made a soup with this many beans?)

With that, I leave you with this.

Notes

1 Ziad Obermeyer, Brian Powers, Christine Vogeli, and Sendhil Mullainathan, “Dissecting Racial Bias in an Algorithm Used to Manage the Health of Populations,” Science 366(6464): 447–453 (2019). The algorithm’s manufacturer cooperated with the researchers in confirming the finding and reported that adjusting the predicted target from healthcare costs to a measure incorporating actual health needs reduced racial bias in outcomes by roughly 84%. The case is, among other things, an instance of an institution buying a tool whose internal logic it did not fully understand from a vendor whose own incentives were not the institution’s incentives — a structural feature that warrants its own treatment in a later post.

2 Michael G. Vann, “Of Rats, Rice, and Race: The Great Hanoi Rat Massacre, an Episode in French Colonial History,” French Colonial History 4: 191–203 (2003), is the canonical academic treatment. The episode is now standard in the literature on perverse incentives, sometimes under the heading of the “cobra effect.” The colonial archive is, as colonial archives often are, more revealing about the colonizer than about the rats.